Smarter Learning. Better Performance.

Optimize employee performance with data-driven workplace learning technologies.

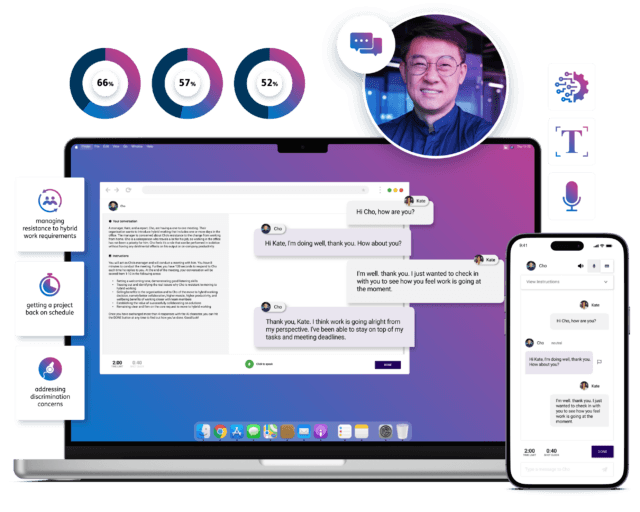

Learning Pool is changing how global businesses solve today’s biggest employee performance challenges.

We’re doing it with data-driven learning technologies that apply insights into who a learner is, what they know, and what they need to do. By aligning learning to individual needs and business objectives, we make workplace learning experiences smarter.

Trusted by 1,500+ global companies

What our customers say

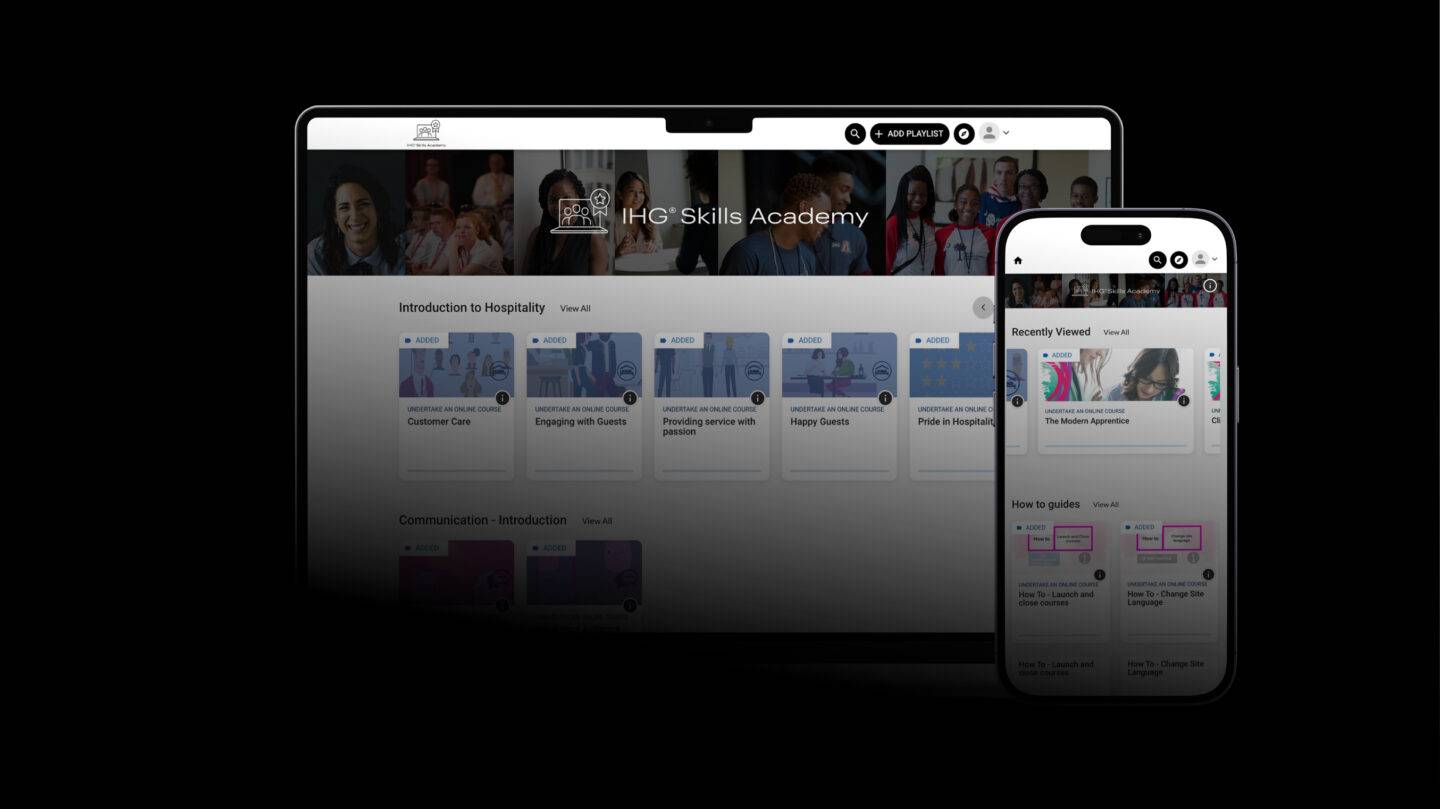

A truly exceptional story

InterContinental Hotels Group

Industry leader

Industry awards

Got an employee performance challenge to solve?

Get in touch to discover how our portfolio of learning solutions can help.